If something online is visible and verified, people often assume it is safe. Platforms like OnlyFans are frequently cited as proof that the industry now gives women more autonomy and empowerment, offering a reassuring narrative of women taking control of their labor through digital platforms. This idea is appealing at face value, but it is deeply misleading. By treating this highly visible sector as representative of the market as a whole, rather than as one piece of a larger digital sex economy, public discourse and legal argument both fail to consider the conditions under which exploitation takes place.

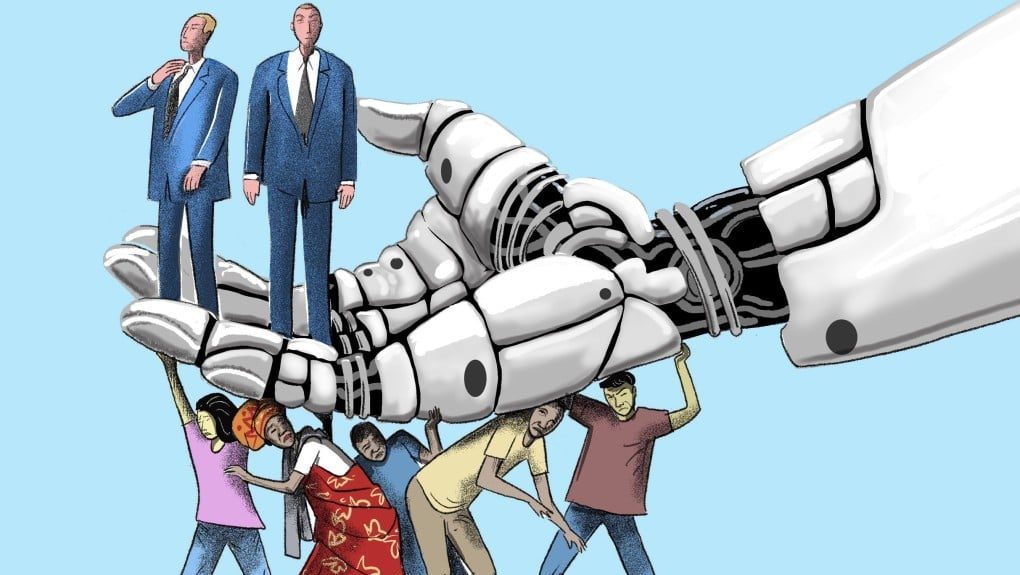

The digital sex economy remains dependent on a market in which women disproportionately supply sexual labor, while men disproportionately shape the conditions under which it is made and consumed. What complicates this issue is the notion of visibility being synonymous with empowerment. Even where participation is framed as individual choice, women’s bodies are commodified within systems in which control over income and access is frequently exercised by male intermediaries or reinforced by male consumer demand.

This same structure of control is also reinforced by consumer demand within digital sex markets, particularly on subscription-based platforms like OnlyFans, where the top 1% of creators capture roughly one-third of total revenue and income is closely tied to retaining paying subscribers. Market incentives reward conformity to narrowly defined, male-oriented preferences, encouraging women to adopt hyper-specific identities that may not reflect their own choices. These pressures limit the range of acceptable expression, effectively boxing women into roles that are profitable and increasingly difficult to refuse or exit. As demand narrows, so does agency: women who deviate from the male gaze risk economic loss and perhaps even exclusion from platform algorithms altogether. In this way, consumer-driven market dynamics intensify sexism by turning gendered stereotypes into economic requirements. When economic authority and platform access are concentrated in the hands of intermediaries, women’s ability to refuse or renegotiate terms becomes constrained, even if consent is formally expressed. Because participation is shaped by these market pressures, the resulting labor arrangements often appear voluntary when documented, even though they are frequently constrained by economic dependence and consumer demand.

The gendered character of this control matters because it influences how coercion is interpreted by legal and regulatory systems, and the consequences extend directly to law and enforcement. Legal and regulatory frameworks increasingly rely on platform self-regulation and documented participation as proxies for autonomy, even as coercion in digital markets rarely resembles overt force. Instead, exploitation often manifests through economic dependence and third-party control, dynamics that remain largely invisible within systems meant to detect clear violations rather than structural power. Survivor support organizations such as the Polaris Project, along with federal trafficking data, consistently show that 28% of cases involve emotional abuse, 26% involve economic control, and 23% involve threats. Financial control and manipulation are patterns that fall outside the evidentiary models most enforcement resources are equipped to apply. As a result, platform visibility ends up serving as a proxy for consent, reassuring the public while leaving enforcement poorly equipped to recognize exploitation when it no longer looks like abuse. The more visible digital sex markets become, the easier it is to mistake compliance for consent.

In less prominent parts of the digital sex economy, control over labor often operates through third-party intermediaries who exercise varying degrees of control over production and finances. These intermediaries, often male, include account managers or promotional agencies who control account access and handle revenue-generating interactions on behalf of creators, including using ghostwriters to impersonate creators to encourage spending. For example, some agencies operate multiple OnlyFans accounts at once, controlling content decisions while providing a portion of the overall revenue to the creator. As a result, arrangements that would otherwise raise concerns are treated as simply entrepreneurial, preserving the appearance of autonomy while undermining its substance.

In practice, enforcement in digital sex markets is increasingly mediated through platform self-regulation instead of direct public oversight. These high-visibility platforms rely on complaint-based moderation and on age and identity verification, all of which are treated as sufficient safeguards against exploitation. Yet regulators have repeatedly found these safeguards to be unreliable. For example, the U.K. communications regulator Ofcom fined OnlyFans after discovering failures in how the platform implemented and disclosed its age verification system, which included inaccuracies in its facial-age estimation thresholds. More broadly, research on platform moderation shows that automated systems frequently fail to detect harmful content and produce inconsistent enforcement outcomes, with major gaps in identifying non-obvious forms of abuse. Because platforms are neither equipped nor incentivized to investigate exploitative relationships, these relationships persist even when formal consent is documented and the platform rules are technically satisfied.

These limitations are reinforced by Section 230 of the Communications Decency Act, which grants platforms broad intermediary immunity for user-generated content. While this immunity reflects longstanding concerns regarding free expression and the dangers of overregulating online speech, it severely reduces legal incentives for platforms to proactively investigate coercion that does not present as a clear content violation. As a result, responsibility for identifying trafficking is effectively outsourced to private actors, specifically platform moderation systems, whose tools rely on automated flagging and user complaints and are not suited to detecting the kinds of exploitation that make up much of the digital sex economy.

Treating high-visibility platforms as representative of the digital sex economy as a whole offers a convenient and empowering narrative of autonomy, but it obscures the structural conditions under which exploitation continues. By relying on platform self-regulation and intermediary immunity, current legal systems fail to identify coercion that operates through economic dependence and informal authority. These enforcement failures are not gender-neutral; they reflect long-standing assumptions about women’s labor and consent that still allow certain forms of male control to appear voluntary rather than exploitative. Until enforcement methods recognize the realities of power and dependence in these digital labor markets, compliance will continue to be mistaken for consent.

• • •

Sahana Patel is a sophomore studying Political Science, Security, and Technology, as well as Philosophy. She is involved with the Tepper Undergraduate Real Estate Club, Carnegie Mellon’s Pre-Law Society, and research on campus.
